Finding an injective mapping with restrictions for values is NP-Complete

This post is about an interesting problem I recently tried to solve efficiently but ended up proving its NP-completeness. Informally, the problem is to find an injective mapping between two finite sets with certain, statically defined properties for values. For example, if and , we may want to find an injective mapping with restrictions , , , , . I find this problem of particular interest, because its nature is in my opinion very different compared to well-known NP-complete problems proven to be so by Karp. In this post we will visualize and formalize this problem, prove its NP-completeness and, finally, derive some interesting corollaries.

The problem

The above intuitive description of the problem can be equivalently formalized as follows:

Name: Restricted injective mapping

Input: A set as well as a collection of subsets of , i.e. for all .

Question: such that for all ?

This definition is equivalent to the brief description of the problem at the beginning of this post, since we can only focus on the restrictions in the target set (assuming that the elements in the source set are numbered) and it is possible to define such an injective mapping if and only if such that for all .

Example

Consider the following instance of the problem:

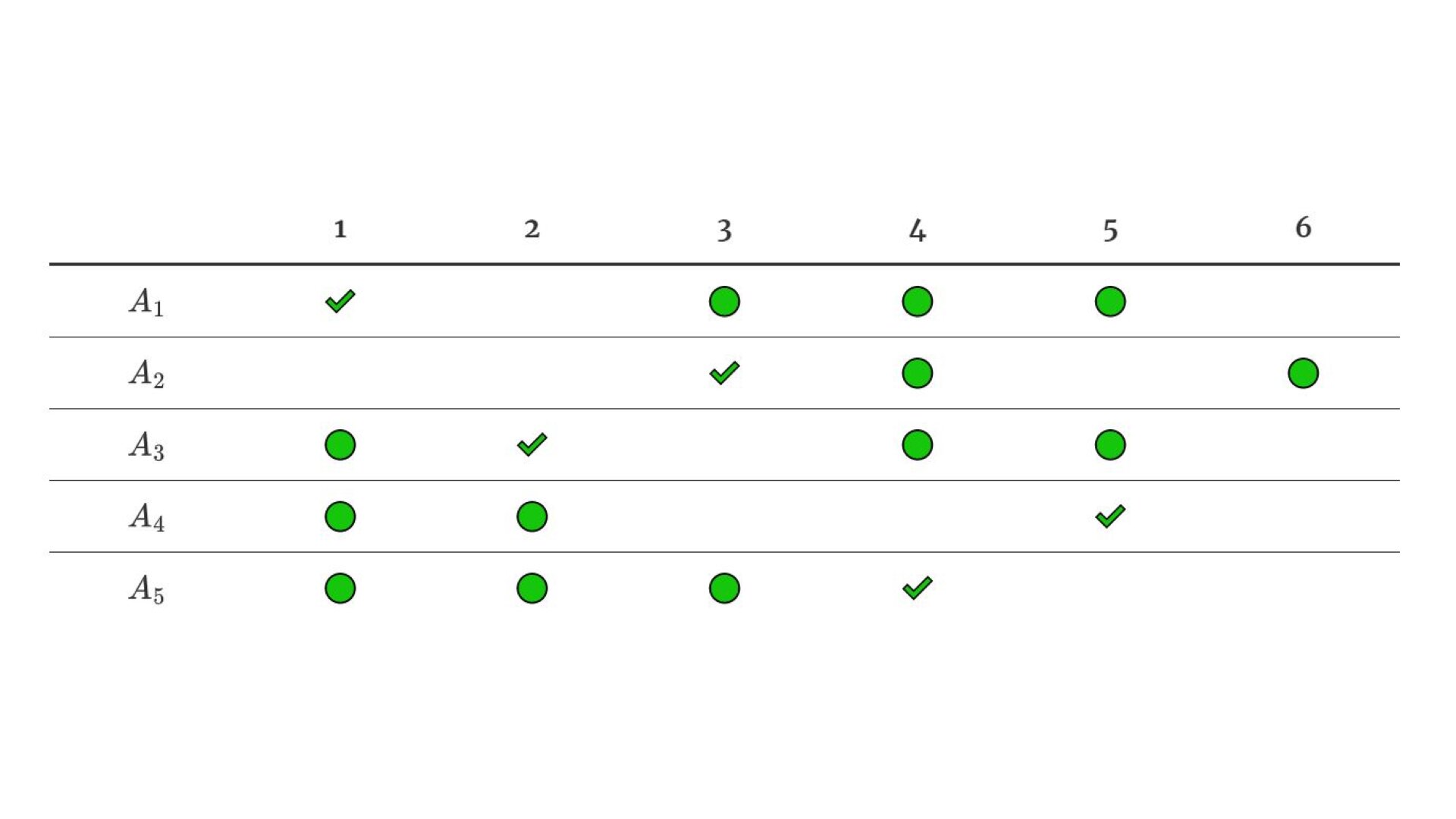

This example instance can be visualized with a table

| 1 | 2 | 3 | 4 | 5 | 6 | |

|---|---|---|---|---|---|---|

| E | E | E | E | |||

| E | E | E | ||||

| E | E | E | E | |||

| E | E | E | ||||

| E | E | E | E |

where the elements of the subsets are marked with E. In other words, we essentially want to find 5 E-cells in the above table such that no two cells that we pick have the same row or column. This instance of the problem has a solution, since we can pick, for example, the following cells marked with S:

| 1 | 2 | 3 | 4 | 5 | 6 | |

|---|---|---|---|---|---|---|

| S | E | E | E | |||

| S | E | E | ||||

| E | S | E | E | |||

| E | E | S | ||||

| E | E | E | S |

This visualization makes it also very clear why the formalization of the problem above is really equivalent to finding an injective mapping with restrictions for values — we can interpret the above table as a mapping where , , , , . Obviously,

- if some two cells that we choose are in the same column, then is not injective,

- by definition of a mapping, no two cells can have the same row

A special case of the problem

Every injective mapping with is also surjective and bijective. This leads us to an interesting special case of Restricted injective mapping, where we just add an additional constraint, that the amount of subsets in the collection must be equal to the cardinality of :

Name: Restricted bijective mapping

Input: A set of cardinality and a collection of subsets of , i.e. for all .

Question: such that for all ?

In other words and in light of the above visualization of an example instance of Restricted injective mapping with a table, this special case has an additional requirement that there are exactly as many rows as columns.

Proof idea

In order to prove -hardness of the Restricted bijective mapping problem above, we need to construct a reduction from an already-proven -complete problem. This reduction must somehow express the constraints of the original problem with the collection of subsets . Many -complete problems are graph-related and in graphs, a path with disjoint vertices seems to have very similar constraints compared to our problem. It may therefore be a good idea to let be the set of nodes in an undirected graph where, without loss of generality, and define for as follows:

For example, consider the following graph with vertices:

If there exists a cycle in that visits each node exactly once, or, in other words, a Hamiltonian circuit, like in this example where such a cycle is marked red, then, by definition of , it holds that

which means that such a reduction will lead to a yes-instance of Restricted bijective mapping. However, the converse is not true — a graph without a Hamiltonian circuit can still be reduced to a yes-instance of our problem. For example, the graph

gets reduced to

where it is possible to choose

such that for . It is clear that this problem only arises when the graph contains multiple vertex-disjoint cycles such that every node is part of exactly one such cycle.

The Hamiltonian circuit problem is a well-known -complete problem, which is formally defined as follows:

Name: HC

Input: Undirected graph

Question: Does there exist a hamiltonian circuit, i.e. a cycle that visits every vertex exactly once?

If we prove -hardness of HC with a reduction that cannot produce graphs with two or more vertex-disjoint cycles such that every node is in exactly one such cycle, and then prove -hardness of Restricted bijective mapping with a reduction that assumes that the input graph cannot be partitioned in vertex-disjoint cycles as mentioned above, then the composite reduction will prove -hardness of our problem. For a formal proof I will therefore need to reiterate a variant of the well-known proof of -completeness of HC emphasizing the fact that only graphs with the above mentioned constraint are being produced.

NP-completeness of HC

This problem is obviously in , because we can use a sequence of vertices as a certificate that can be verified in polynomial time by making sure that:

- this sequence of vertices is a path with disjoint vertices;

- the last vertex is connected with the first one;

- every node of the graph is in the sequence;

In order to prove -hardness, we will construct a polynomial-time reduction from the 3-SAT problem. Let be a graph with the following vertices and edges:

This gadget contains exactly the following two hamiltonian paths between and (we will drop the in node names if the graph containing that vertex can be inferred from the context):

This is the case because after choosing or after , the rest of the hamiltonian path is uniquely determined — , , , , and cannot be parts of the path since then there is no way to visit

- or ,

- or ,

- or ,

- or ,

- or ,

- or ,

respectively, and then reach .

Let be a 3-SAT formula with clauses and, without loss of generality, variables such that no variable appears positively and negatively in the same clause (otherwise remove such redundant clauses). We can construct a graph as follows:

- For each variable , add the gadget to . If , connect the current gadget with the gadget for the previous variable by creating an edge .

- For each clause , create a node .

- If a positive literal appears in clause , create edges and .

- If a negative literal appears in clause , create edges and .

- Create an edge .

Intuitively, a hamiltonian circuit in containing corresponds to a variable assignment where . Analogously, in the hamiltonian circuit means that it corresponds to a variable assignment with . By construction of and because of the fact that no variable in appears in a single clause both positively and negatively, node can be part of a hamiltonian circuit if an only if the hamiltonian circuit “sets” at least one literal in clause to true, as described above. Moreover, if is part of the hamiltonian circuit, then must be the next node after , because otherwise it is impossible to visit all nodes because of the way gadgets are connected. The same holds for the case when is part of the hamiltonian circuit — then must be the next node after . It follows from the above, that there exists a hamiltonian circuit iff is satisfiable.

We now need to argue why cannot be partitioned into at least two vertex-disjoint cycles. Assume this were not the case. Then there would exist a clause such that is part of a cycle that doesn’t visit every . If this cycle contains only and nodes within a single gadget , then there exists at least one in that gadget, which cannot be visited by any vertex-disjoint cycle because of the above argument that a gadget has exactly two hamiltonian paths, and we therefore get a contradiction. Otherwise the cycle must pass through at least two gadgets and and must visit the “bridge” between them, i.e either and or and , because otherwise at least one node out of would not be part of a vertex-disjoint cycle. But this means that there exists no path between and apart from meaning that those nodes are not part of a vertex-disjoint cycle. We therefore get a contradiction.

The reduction described above is obviously a polynomial-time reduction. This completes the proof of -completeness of HC.

NP-completeness of finding a bijective mapping

Restricted bijective mapping is in , because can be used as a certificate. It can be verified in polynomial time that and that for all .

In order to prove -hardness, we will use the idea described in the proof idea section and construct the following polynomial-time reduction from the 3-SAT problem:

- Given a

3-SATformula , construct a graph as described in the proof of -completeness ofHCabove. We can assume without loss of generality that as it is possible to rename vertices of efficiently. - Let .

- For each , compute .

- Finally, construct the collection of subsets .

It follows from the correctness of the reduction in the proof of -completeness of HC, that is satisfiable iff has a hamiltonian circuit. In order to prove the correctness of the present reduction, we therefore need to argue why has a hamiltonian circuit if and only if:

(): Assuming there exists a hamiltonian circuit , we can choose

distinct values from .

(): Let such that for . We can define the following mapping:

It follows from the fact that values are pairwise distinct, that is injective. Moreover, is bijective as the set of vertices is finite. As we argued in the np-completeness of hc section, cannot be partitioned into more than one vertex-disjoint cycle. This means that:

However, because is bijective and each application of leads to a new value in , it holds that:

It follows from the above two statements that

and is the minimal number of times must be applied to reach starting in . therefore contains

meaning that there exists a hamiltonian circuit

This completes the proof.

Corollaries

NP-completeness of finding an injective mapping

Restricted injective mapping is obviously in .

The -hardness of the present problem follows directly from the -hardness of Restricted bijective mapping as we can construct a trivial reduction from the latter problem that just passes the instance without any changes.

Counting the amount of injective mappings is NP-hard

Consider an instance of Restricted injective mapping, concretely a set and a collection of subsets of . It may be interesting not only to determine whether we can choose such that for all , but also to count the amount of such possibilities and to assess the hardness of this combinational problem. With the inclusion–exclusion principle we can count the amount of possibilities of choosing such that for all . Let . To do that, we first define:

The above amount of possibilities of choosing the wrong tuples that still satisfy the condition for is equal to:

This formula has terms and, given the value of , we can calculate the amount of possibilities we are interested in efficiently using the following formula:

For these reasons, the calculation of is the performance bottleneck of this problem. We can calculate using

where is the set of indices for the set that come up in the formula for , but this approach would unfortunately cause exponential blowup. The key question I now want to address is whether it may still be somehow possible to calculate more efficiently.

The problem

Name: Restricted injective mapping count

Input: A set , collection of subsets of and .

Question: Is the amount of possibilities of choosing such that for all at least ?

is -hard, since Restricted injective mapping can be reduced to it (with a reduction that simply sets and passes the instance of the problem without any changes). We can therefore conclude, that cannot be computed efficiently unless . Intuitively, the present combinational problem uses the full power of the inclusion-exclusion principle (see this post for similar reasoning).