Computing square roots modulo a prime with the Tonelli-Shanks algorithm

This post is about the problem of computing square roots modulo a prime number, a well-known problem in algebra and number theory. Nowadays multiple highly-efficient algorithms have been developed to solve this problem, e.g. Tonelli-Shanks, Cipolla’s algorithms. In this post we will focus on one of the most prominent algorithms, namely the Tonelli-Shanks algorithm, which is often described in a rather complicated way in the literature, although its derivation and the idea behind it is actually simple and elegant. The goal of this post is to showcase this beauty of the theory and to then show how the Tonelli-Shanks algorithm can be implemented in Python. We also discuss some interesting connections of this problem to cryptography.

Preliminaries

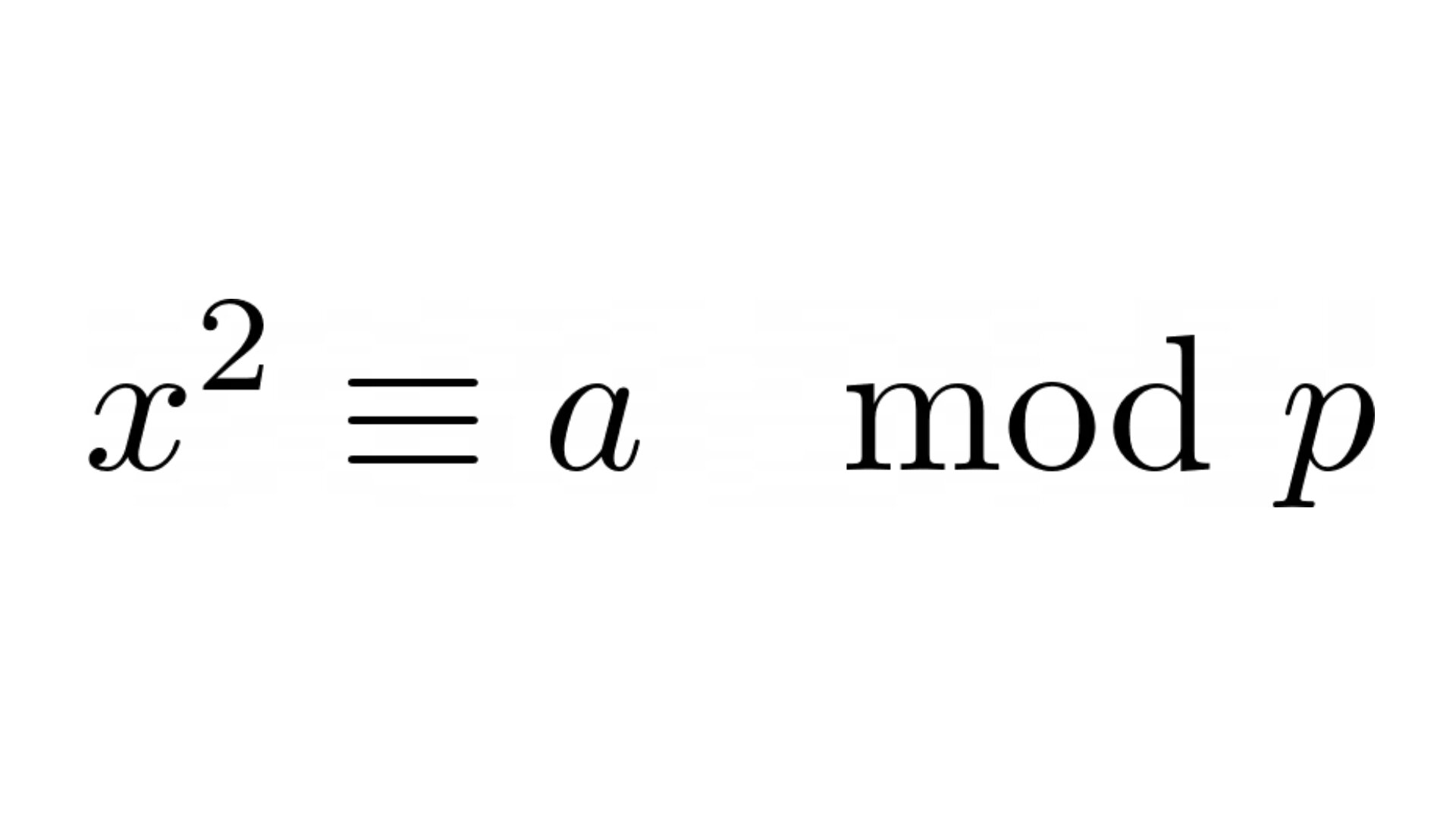

Formally, the problem of computing square roots modulo a prime is to solve the congruence

for , given an odd prime number and , which is also called a quadratic residue if the congruence above holds. We first discuss some theory we will rely on in the next section about the Tonelli-Shanks algorithm itself.

Let be the squaring endomorphism defined as follows

(recall that an endomorphism is a homomorphism of an algebraic structure to itself, it is an easy exercise to verify that is indeed an endomorphism). Observe that , because every element of the kernel is a root of the polynomial, which cannot have more than two roots because of its degree, meaning that and are its only roots. Let

be the set of all squares modulo . In the following lemma, we derive a formula for the exact number of squares modulo :

Lemma: Let be an odd prime. Then .

Proof: By the fundamental homomorphism theorem, there exists an isomorphism

Since all cosets have equal cardinality,

This completes the proof.

Another major tool for characterizing squares modulo is the Legendre symbol defined as follows

where and is a prime. If , then we can think of as of an “indicator” variable outputting if holds for some and otherwise. In fact, the Legendre symbol can be computed efficiently via the Euler’s criterion formulated in the following theorem.

Theorem (Euler): Let and is an odd prime. Then:

Proof: If , then the statement holds trivially. We thus focus only on the case when . By Fermat’s little theorem, it holds that

which is equivalent to

meaning that

Since we are working over the finite field , there cannot exist non-trivial divisors of zero, so it follows that modulo . Thus, the following mapping

is a well-defined homomorphism of groups. Moreover, is actually an epimorphism (i.e, is a surjective homomorphism), which can be shown as follows:

, because .

, because is a cyclic group meaning that its generator cannot have order less than . Thus, , so it follows that .

In other words, we have just shown that . At this point, to prove the theorem it suffices to show that . The inclusion is easy: if , i.e., , then

where all computations are modulo and the last equality holds by Fermat’s little theorem. With proven, since we are working over the finite field it suffices to show that in order to prove that . By the previous lemma, , so it suffices to prove that . Indeed, since by the fundamental homomorphism theorem

and all cosets are of equal cardinality,

Solving for yields

This completes the proof. Having done this preparatory work, we now discuss and implement the Tonelli-Shanks algorithm.

Computing square roots modulo a prime

First observe that since is a prime, is a field and hence the polynomial has exactly two roots. It follows that it suffices to compute just one root of , because it is trivial to obtain the other one by simply performing negation modulo . From now on, all computation will be modulo if not stated otherwise. We also assume that , or, equivalently, that , because it is trivial to compute the square root of zero.

The core idea behind the Tonelli-Shanks algorithm is to make use of the fact that if for some odd , then

and hence is a square root of . By the Euler’s theorem of the previous section above, we know that holds if and only if is a square modulo : if , then the algorithm can directly identify that the given number is not a square and terminate accordingly. Thus, intuitively is a “good” initial value to start with while searching for an odd satisfying . The remaining ideas are best shown by looking at how the algorithm works on a concrete example.

Example

Let and . , because . We set . Unfortunately, we cannot compute the square root of directly using the formula above, because is even. We thus divide by as long as possible and hope that at some point will become odd such that still holds:

Unfortunately, already after the first division of by two we get , but we want to have one on the right-hand side. The idea is to pick some , because by Euler’s Theorem (see above)

It thus holds that

Let . We now run the algorithm recursively to compute the square root of , because then we can recover the square root of by multiplying the square root of with . We repeatedly divide by two and recompute in the same way we did above:

Again, already after the very first division of by two we obtain . We thus again write

and determine recursively the square root of :

We now finally have the desired situation that is odd and , so we can compute one of the square roots of :

We now recall that , so it follows that the square root of is

Similarly, since , it follows that

Hence, the square roots of modulo are , that is, and .

The Tonelli-Shanks algorithm

We now state the Tonelli-Shanks algorithm in general:

Set . If , then return “not a square”, because is not a square modulo by Euler’s theorem (see above) and is equal to the Legendre symbol.

Otherwise we have that . Set , so that

At this point we have the invariant that for . While is even and , set and update .

If , then the square root of is by the above discussion, since is odd.

Otherwise it holds that for , where . Choose an arbitrary non-square by repeatedly picking uniformly at random until .

Run the same algorithm recursively from step 3 with the variable set to and other variables left unchanged. This establishes the invariant of step 3, because

- Let be the square root of obtained from the recursive call. We are interested in computing such that (interpret and as new variables, not as operations). Observe that

- Bringing to the left side yields

- Note that we have used the fact that here. It follows that is a square root of modulo .

We now argue why this algorithm always terminates and is correct. Observe that is changed throughout the algorithm in a way which ensures that always holds. This is the case, because are the only square roots of modulo and in every step we divide the exponent only by two. The case when is reduced to the case when but for a different , such that the square root for the original can be recovered from the square root of the new . Finally, observe that the algorithm either terminates directly because holds for an odd , or gets increased by at least one and hence gets divided by a power of two greater than one. This guarantees termination, because iterative division of by two will at some point yield an odd number whereupon the algorithm will terminate.

We now make a few additional remarks about the Tonelli-Shanks algorithm.

If is odd or, equivalently, if , then the algorithm will not make any recursive calls and output the square root of .

The loop in step 3 of the algorithm can be optimized by replacing it with a binary search algorithm for finding an such that . If during such binary search, we have , then we continue searching for smaller values of . If , then this means that we have divided too many times, so it follows that binary search is to be continued for larger values of . Although this optimization is beautiful theoretically, in practice it may only give a very tiny performance gain, if any.

The non-square used in the algorithm needs to be found and computed only once. During every recursive call, the same value of can be reused to deal with the situation. This implies that needs to be computed only once, so the computation of the value can be optimized.

As regards the expected time needed to find a non-square via guessing, since exactly half of the elements of are non-squares, two is the expected number of times we need to generate uniformly at random and to test whether it is a non-square or not.

The expected running time of the algorithm is , if we assume that multiplication modulo is a constant-time operation.

Implementation in Python

In this section I would like to show how the Tonelli-Shanks algorithm discussed above can be implemented in Python. We will need the following utility algorithms:

The extended Euclidean algorithm for computing inverses modulo

Fast exponentiation (by squaring) algorithm

I will reuse the implementation of the extended Euclidean algorithm I described and proved correct in this post. We are now ready to dive into the code:

# file: "tonellishanks.py"

import random

def power_modulo(a: int, b: int, n: int) -> int:

""" Computes a ^ b mod n """

result = 1

while b != 0:

if b % 2 == 1:

# b odd

result = (result * a) % n

a = (a * a) % n

b >>= 1

return result

def extended_gcd(a: int, b: int) -> (int, int, int):

# optional check

if a == 0:

return b, 0, 1

# without this check the first iteration will divide by zero

if b == 0:

return a, 1, 0

un_prev = 1

vn_prev = 0

un_cur = 0

vn_cur = 1

while True:

qn = a // b

new_r = a % b

a = b

b = new_r

if b == 0:

return a, un_cur, vn_cur

# Update coefficients

un_new = un_prev - qn * un_cur

vn_new = vn_prev - qn * vn_cur

# Shift coefficients

un_prev = un_cur

vn_prev = vn_cur

un_cur = un_new

vn_cur = vn_new

def inverse_modulo(a: int, n: int) -> int:

_, b, _ = extended_gcd(a, n)

return b % n

def legendre_symbol(a: int, p: int, /) -> int:

return power_modulo(a, (p - 1) >> 1, p)

def _choose_b(p: int, /) -> int:

b = 2

while legendre_symbol(b, p) == 1:

b = random.randrange(2, p)

return b

def _tonelli_shanks_recursive(a: int, k: int, p: int, b: int, b_inverse: int, /):

"""

Computes a square root of a modulo prime p

:param a: the number to take the square root of

:param k: positive integer, such that a^m = 1 (mod p) where m = (p-1)/(2^k)

:param p: odd prime p modulo which we are working

:param b: an arbitrary non-square modulo p

:param b_inverse: the inverse of b modulo p, i.e., b * b_inverse = 1 (mod p)

:return: one of the square roots of a modulo p (the other can be obtained via negation modulo p)

"""

m = (p - 1) >> k

a_m = 1

while m % 2 == 0 and a_m == 1:

m >>= 1

k += 1

a_m = power_modulo(a, m, p)

if a_m == p - 1:

# a^m = -1 (mod p)

b_power = 1 << (k - 1)

b_power_half = 1 << (k - 2)

a_next = (a * power_modulo(b, b_power, p)) % p

a_next_root = _tonelli_shanks_recursive(a_next, k, p, b, b_inverse)

a_root = a_next_root * power_modulo(b_inverse, b_power_half, p)

return a_root % p

# we now handle the case when m is odd

# this case is easy, a^((m+1)/2) is a square root of a

return power_modulo(a, (m + 1) >> 1, p)

def tonelli_shanks(a: int, p: int, /) -> int | None:

"""

Computes a square root of a modulo prime p

:param a: the number to take the square root of

:param p: odd prime p modulo which we are working

:return: one of the square roots of a modulo p (the other can be obtained via negation modulo p)

"""

if legendre_symbol(a, p) != 1:

# a is not not a square modulo p

return None

b = _choose_b(p)

b_inverse = inverse_modulo(b, p)

return _tonelli_shanks_recursive(a, 1, p, b, b_inverse)

Recall that performing a left bitwise shift (<<) is essentially a fast way to multiply the left operand with two raised to the right operand value’s power. Similarly, a right bitwise shift (>>) divides the left operand by two raised to the right operand value’s power.

Running the implementation on the example of the previous section, that is, for and yields the expected correct roots:

$ python3 -i tonellishanks.py

>>> tonelli_shanks(8, 17)

5

>>> tonelli_shanks(8, 17)

12

Note: The above implementation is a simplified version of my thoroughly tested implementation of the algorithm available in this GitHub repository.

Related hard problems

I find the problem of computing square roots modulo a prime especially interesting because it is in some sense very “close” to problems where there are very good reasons to believe that they are hard in the complexity-theoretic sense. For example, the same problem is not known to be efficiently solvable if we are working modulo a Blum number, that is, if we are interested in solving

for , where are prime numbers such that . Moreover, this modified problem is at least as hard as the problem of factoring Blum numbers, because given an oracle for computing the four square roots for , one could factor a given Blum number efficiently as follows:

Choose a number uniformly at random (can be implemented by simply choosing a random number between and inclusively, because if , then we have already effectively guessed the factor of )

Use the (hypothetical) oracle to compute some square root of modulo .

If or , then we have failed to factor .

Otherwise it holds that and the factors of are and .

Since is chosen at random and we only pass to the oracle, the oracle’s answer is independent of . It is not hard to show that every quadratic residue modulo a Blum number has exactly 4 distinct roots (using the Chinese Remainder Theorem), two of them are and which do not yield a factor of in the algorithm above. It follows that the probability of successfully factoring a number is , meaning that the algorithm above is a randomized polynomial-time Turing reduction. Moreover, the success probability of the above algorithm can actually be significantly amplified, but this is outside of the scope of this post. It is also worth noting that the above security reduction is useful in cryptography. For example, the Rabin cryptosystem’s security heavily relies on the assumption that computing square roots modulo a Blum number is computationally hard, which as we have shown above is implied by the assumption that factoring Blum numbers is hard, which is a plausible hardness assumption to date. If Rabin’s cryptosystem did all computations modulo a prime instead of a Blum number, then the cipher would be easily breakable, since it would then be possible to decrypt plaintexts using the Tonelli-Shanks algorithm.